It’s an intellectual property apocalypse. Gen AI companies have decided copyright law isn’t really for them. Content pirates are operating on an industrial scale. Bots are trying to break into your archives 24/7. And if you actually pay for your streaming services, your friends think you’re a sucker.

These problems are not going to get resolved all at once. The IP crisis is a result of several intersecting phenomena. New technologies are a disruptor, obviously, but only one. Co-operating in the collapse are corporate monopolies, ineffective governance, and thinly disguised contempt for consumers and audiences.

Most solutions for protecting intellectual property tend to be tactical. The focus is on heading off immediate threats. While these larger systems may someday join together in a mutually beneficial ecosystem of profit and respect, in the meantime, best to make sure your doors are locked and you have a baseball bat near the front door just in case.

Crawling the web for stolen content has been a standard technique for finding, and removing, pirated IP. But crawling can be technically tedious and costly. The scale of the battlefield means human-powered monitoring is rarely effective. But AI (or what your dad called “machine learning”) is making complicated web search accessible and efficient.

Crawling after the pirates

San Francisco-based Redflag AI helps content owners shut down unauthorized use of their digital assets using AI-driven search. Along with film and TV, the company works across live broadcast, publishing, and the creator economy. Its tech can search dozens of platforms globally in multiple languages.

Redflag was originally launched as a platform for industrial-scale web crawling and sentiment analysis. In 2022, the company began to use its automated tool to help film companies find pirated content.

“We were able to reduce the expense of looking through the noise of all the pages online of whatever content you’re looking for, and help rule out false positives,” explains Redflag AI CEO Max Eisendrath. “It’s a very efficient type of crawling and we can do this at scale.”

The original focus was primarily on static content, for the likes of publishers, creators, and music artists. In the last two years the company has started helping live broadcasters locate pirated streams, using its proprietary watermarking and fingerprinting tech.

Redflag has also partnered with YouTube, and works with other social platforms, including Facebook and TikTok, to take down content. The collaboration has also enabled AdSense revenue to be redirected back to the original creators.

Taking down pirated content is one thing, but creators often have to suck it up when it comes to lost advertising revenue. Redflag has been working with a number of big YouTube channels and creators and expects to roll out similar monetization features with other social platforms later in the year.

Taking down before the touchdown

Once pirated content is identified, it needs to be removed, which is not always as straightforward as it seems. The legal obligation on CDNs that host illegal content, often – but not always – unwittingly, is pretty roomy. The law generally says that content needs to be taken down “as soon as reasonably possible”, but in the case of live broadcast, where the value of the content is measured in minutes, a lot of damage can be done before the content is finally pulled.

“It’s a pain to do manually, depending on where you’re trying to send DMCA notices or other sorts of takedown notices,” says Eisendrath. “You might need to send it to four different places. But that kind of calculation and process is automated now.”

For protecting live content, Redflag embeds an invisible watermark into the stream, which can be user- or session-based to tag each unique viewer. The watermark is encoded at the CDN level, which enables scaling it to tens of millions of concurrent users.

The sending of takedown notices is automated. During a broadcast, Redflag AI’s web crawlers search across possible piracy sources looking for the stream. When a specific watermark is identified, the system sends a message via an API connection to the CDN, which shuts off that individual stream.
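To make that loop concrete, here is a minimal sketch of the detect-and-revoke step. It is not Redflag's code or any real CDN's API: `FakeCDN`, `handle_detection`, and the watermark and session IDs are all hypothetical names invented for illustration, and the sketch assumes the crawler has already recovered a watermark ID from a pirated copy.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class FakeCDN:
    """Stand-in for a CDN control API (hypothetical, for illustration only)."""
    active_sessions: Set[str] = field(default_factory=set)

    def revoke(self, session_id: str) -> bool:
        """Shut off one session; return True only if it was live."""
        if session_id in self.active_sessions:
            self.active_sessions.remove(session_id)
            return True
        return False


def handle_detection(
    watermark_id: str,
    watermark_to_session: Dict[str, str],
    cdn: FakeCDN,
) -> Optional[str]:
    """Trace a recovered watermark back to its session and revoke that session."""
    session_id = watermark_to_session.get(watermark_id)
    if session_id is None:
        return None  # unknown watermark: nothing to shut off
    return session_id if cdn.revoke(session_id) else None


# Assumed setup: each viewer session was issued a unique watermark.
watermark_to_session = {"wm-001": "session-a", "wm-002": "session-b"}
cdn = FakeCDN(active_sessions={"session-a", "session-b"})

# A crawler reports finding watermark "wm-002" on a piracy source.
revoked = handle_detection("wm-002", watermark_to_session, cdn)
```

Only the leaking session is cut off; every other viewer keeps watching. In a real deployment the lookup table and the revoke call would sit behind the CDN's own authenticated API.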

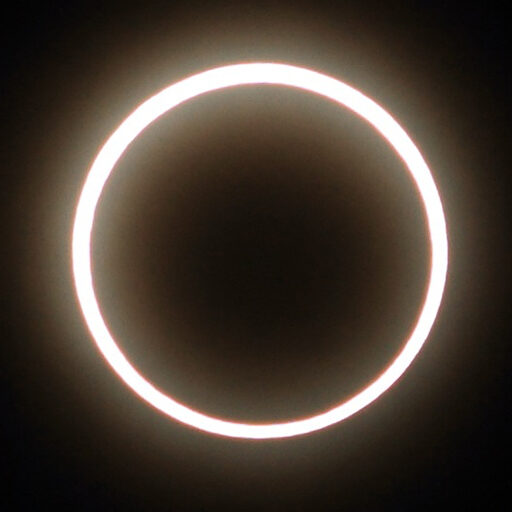

All-seeing Cyclops

The suite of tools is aggregated into one platform, called Cyclops, which allows content owners to monitor and track where infringements are taking place, whether in videos, live content or static image files.

“This is all provided in a typical SaaS dashboard with many different reports and views that they can log in and see. It’s very flexible in terms of how involved content owners want to be in the progress and tweaking the parameters. Or they can just get a weekly or monthly update.”

Cyclops also allows whitelisting of regions or providers. If the studio wants audiences in a specific country to have full access to a film, pirated or not, before its big sequel, there’s nothing stopping them.

“We have pretty complicated white lists in terms of what people want to be seen.”
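As a rough illustration of how a whitelist might gate takedowns, the sketch below skips any detection that falls in an allowed region or on an allowed provider. The `Detection` fields, the `needs_takedown` helper, and the example values are assumptions made for this sketch, not a description of how Cyclops is actually built.

```python
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class Detection:
    url: str
    region: str    # assumed: country code where the copy is served
    provider: str  # assumed: platform or host where the copy was found


def needs_takedown(
    detection: Detection,
    allowed_regions: Set[str],
    allowed_providers: Set[str],
) -> bool:
    """Return False when the detection is covered by either whitelist."""
    if detection.region in allowed_regions:
        return False
    if detection.provider in allowed_providers:
        return False
    return True


# Hypothetical rule set: leave copies in one country and on one partner site alone.
allowed_regions = {"BR"}
allowed_providers = {"partner-video.example"}

detections = [
    Detection("https://pirate.example/film", "US", "pirate.example"),
    Detection("https://pirate.example/film-br", "BR", "pirate.example"),
    Detection("https://partner-video.example/film", "US", "partner-video.example"),
]
to_remove = [d.url for d in detections if needs_takedown(d, allowed_regions, allowed_providers)]
```

Real rule sets are presumably richer than two flat sets, with time windows or per-title exceptions, but the principle is the same: the filter runs before any notice is sent.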

Redflag AI’s pricing system scales with the size of the catalog that needs to be protected. This makes it a viable tool for smaller creative businesses too. With the vast majority of content in the world being created by SMBs, democratizing anti-piracy is going to be essential. A batch of t-shirts with a stolen image is just another day at the office for Disney, but for a sole artist, it could mean not making next month’s rent.

Trying to save face – literally

Gen AI is of course the world’s biggest piracy tech, gobbling up every bit of content it can and spewing it out in endless combinations on demand. Proving this theft is stumping rights holders worldwide. We recently saw Natalie Portman’s face fronting a YouTube ad. Or was it just an AI-generated character – some zeroes and ones – that happened to maybe look like Natalie Portman? Who’s to say?

“The likeness question is obviously huge,” says Eisendrath. “To be honest, I don’t think anyone knows how to handle that legally. But once they do, it’s going to be a big thing. We’ve been told by a lot of the bigger players in this industry that they don’t really know how likeness can be totally adjudicated. It’s not even clear if that’s something you can send a DMCA notice about or if it might require a more detailed lawsuit.”

But Eisendrath is optimistic.

“I’m sure that’s going to get sorted out in the next year and then there’s going to be a lot of stuff to take down.

“The easiest way to do it would be YouTube or Facebook says ‘If there’s an Arnold Schwarzenegger likeness in any video and it doesn’t have his official watermark that shows it was generated by him or his team or someone who owns the IP, then it’s going to get removed.’”

Redflag will be releasing a shielding product later this year that will make video assets more difficult to train on. The shield would operate like a form of encryption, with the option of selling a decryption key to companies that want to license content for AI training.

“It’s very expensive for them to get through the kind of noise that’s generated by the shielding process to train on it. I think there’s going to be some new economics around that.”